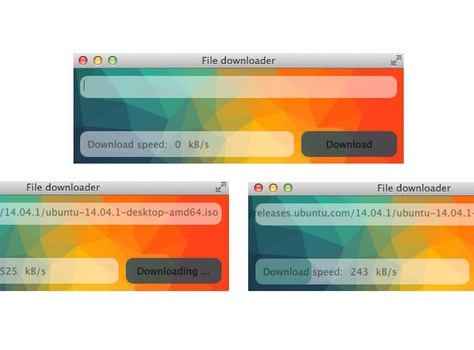

There are many ways to checksum a file, and many ready-to-use programs that do it. Sometimes, though, you need to integrate checksumming into your own app. Most of the time we checksum a file to detect corruption after a copy, move, or download.

Luckily, Python provides the hashlib module, which implements many different secure hash and message digest algorithms, MD5 among them. MD5 has been widely used in the software world to check whether two files are identical or whether transferred data was saved without corruption. It is no longer considered safe against deliberate tampering, but it still works well for detecting accidental corruption.

MD5 is one of the most common checksums on the web. Many download sites publish an MD5 checksum alongside each file they offer, so you can verify your copy after downloading it.

Usage

The hashlib MD5 interface is really straightforward. Let's see how we can checksum a string first:

>>> import hashlib

>>>

>>> string = b"Hello world with hashlib.MD5\n"  # Python 3: update() needs bytes, not str

>>> md5_check = hashlib.md5()

>>> md5_check.update(string)

>>>

>>> md5_check.digest()

b'6\xb7\xf7\xe7\x82\x98\x94\x88O\x1d\x9ak\x19\xb8\xbb\x8c'

>>> md5_check.hexdigest()

'36b7f7e7829894884f1d9a6b19b8bb8c'
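The two outputs above are the same value in two forms: digest() returns the raw 16 bytes and hexdigest() returns them as 32 hexadecimal characters. Here is a minimal sketch of that relationship, assuming Python 3.5 or newer for bytes.hex(). It also shows that feeding the data through several update() calls gives the same result as one call. The variable names are only illustrative.

```python
import hashlib

data = b"Hello world with hashlib.MD5\n"

# Hash everything in one call by passing the data to the constructor.
one_shot = hashlib.md5(data)

# Hash the same bytes in two pieces; each update() appends to the running state.
piecewise = hashlib.md5()
piecewise.update(data[:10])
piecewise.update(data[10:])

raw = one_shot.digest()      # 16 raw bytes
text = one_shot.hexdigest()  # the same 16 bytes as 32 hex characters

print(len(raw), len(text))            # 16 32
print(raw.hex() == text)              # True
print(piecewise.hexdigest() == text)  # True
```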

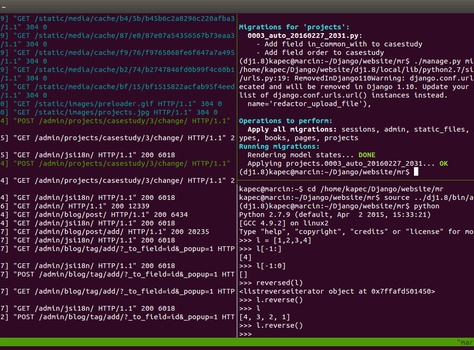

To check that the result is correct, we can create a file with the same content and run md5sum (available on Ubuntu). Note that echo appends a newline, which is why the Python string ends with \n.

$ echo "Hello world with hashlib.MD5" > test.txt

$ md5sum test.txt

36b7f7e7829894884f1d9a6b19b8bb8c test.txt

The output is the same as the hexdigest (hexadecimal representation) from the Python code.
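The same comparison can be done from Python when a published checksum is available. The sketch below assumes a small file that fits in memory. The helper name matches_md5 is hypothetical, and the demo writes its own temporary file so it runs anywhere.

```python
import hashlib
import os
import tempfile


def matches_md5(path, expected_hex):
    """Return True if the file's MD5 hex digest equals expected_hex (hypothetical helper)."""
    with open(path, "rb") as f:
        # Reads the whole file at once, which is fine for small files.
        actual = hashlib.md5(f.read()).hexdigest()
    # hexdigest() is lowercase; normalise case and whitespace of the expected value.
    return actual == expected_hex.strip().lower()


# Demo with a throwaway file holding the same content as test.txt.
content = b"Hello world with hashlib.MD5\n"
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(content)
    tmp_path = tmp.name

expected = hashlib.md5(content).hexdigest()
ok = matches_md5(tmp_path, expected.upper())  # case differences are tolerated
bad = matches_md5(tmp_path, "0" * 32)         # a wrong checksum is rejected
os.remove(tmp_path)

print(ok, bad)  # True False
```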

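There is also a shortcut: the hashlib.md5() constructor accepts the initial data directly. (Python 2 offered the same through a standalone md5 module, md5.new(...), but that module was deprecated in Python 2.5 and removed in Python 3.)

>>> import hashlib

>>>

>>> hashlib.md5(b"Hello world with hashlib.MD5\n").hexdigest()

'36b7f7e7829894884f1d9a6b19b8bb8c'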

Checksum large files

Sometimes the file to checksum is larger than the available RAM. In that case the file cannot be read and checksummed all at once. Luckily, it's easy to read the file in chunks and feed each chunk into the same hash object, which produces one final checksum.

>>> import hashlib

>>>

>>> md5_check = hashlib.md5()

>>> with open('test.txt', "rb") as f:

...     for chunk in iter(lambda: f.read(5), b""):  # 5 bytes only for demonstration; use a few KB in practice

...         md5_check.update(chunk)

...

>>> md5_check.hexdigest()

'36b7f7e7829894884f1d9a6b19b8bb8c'
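For use inside an app, the chunked loop can be wrapped in a function. This is a minimal sketch. The name md5_of_file and the default chunk size of 8192 bytes are assumptions, not something prescribed by hashlib. The demo checks that the chunked result matches hashing the same bytes in one call.

```python
import hashlib
import os
import tempfile


def md5_of_file(path, chunk_size=8192):
    """Return the MD5 hex digest of a file, reading chunk_size bytes at a time."""
    md5_check = hashlib.md5()
    with open(path, "rb") as f:
        # iter() with a sentinel calls f.read() until it returns b"" (end of file).
        for chunk in iter(lambda: f.read(chunk_size), b""):
            md5_check.update(chunk)
    return md5_check.hexdigest()


# Demo: write 1 MiB of deterministic data to a temporary file.
payload = b"0123456789abcdef" * 65536
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(payload)
    tmp_path = tmp.name

chunked = md5_of_file(tmp_path)
small_chunks = md5_of_file(tmp_path, chunk_size=5)  # chunk size does not change the result
os.remove(tmp_path)

print(chunked == hashlib.md5(payload).hexdigest())  # True
print(chunked == small_chunks)                      # True
```

Only one chunk is held in memory at a time, so memory use stays roughly constant regardless of file size.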

Hope it helps.